For the last fifty years, industrial automation has been defined by the caged, rigid industrial robot: fast, strong and precise, but blind and isolated behind safety fences. That era is ending.

The factories that will dominate the next decade look fundamentally different. They are quieter. Their robots work next to humans, not behind them. Their inspection stations catch defects that no human eye could ever see. And critically, they learn.

Three forces are converging right now

1. Deep learning vision has become industrial-grade. Five years ago, deploying a neural network on a production line required a PhD team and months of labelling. Today, software like our Retina AI can train a defect-detection model in minutes with a handful of images and deploy it to a fanless industrial PC that runs 24/7 without a keyboard or mouse. The learning curve has collapsed. The barrier to entry has collapsed with it.

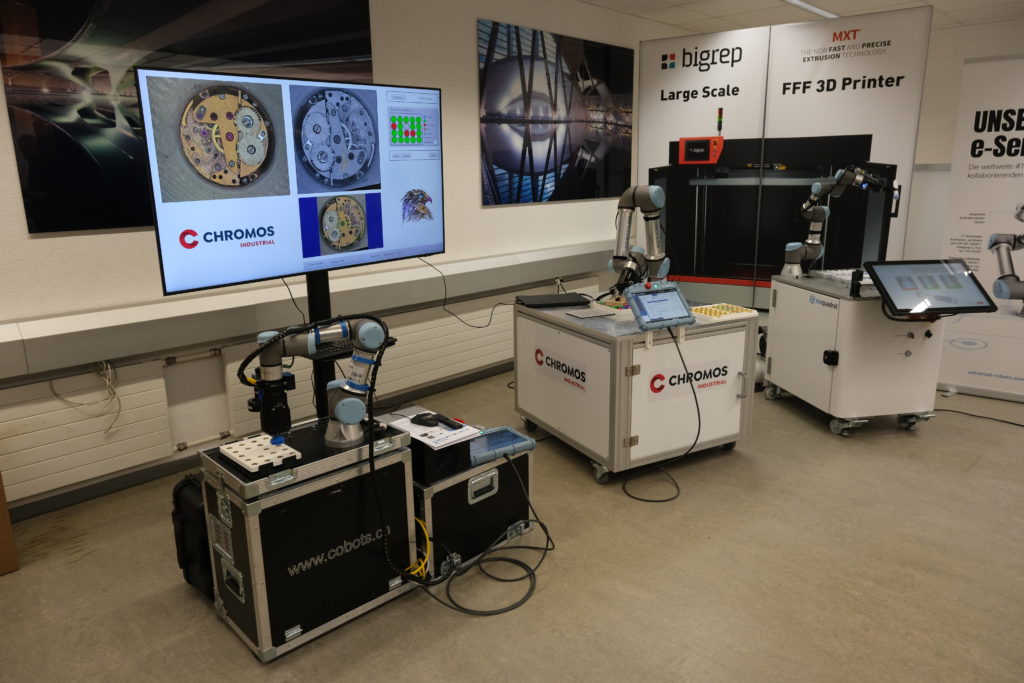

2. Collaborative robots have become flexible enough for real work. A modern cobot like the Universal Robots UR20 can lift 20 kg, runs on the power budget of a household blender and can be redeployed to a new task in an afternoon by a technician, not a systems integrator. Safety standards such as ISO/TS 15066 have matured to the point where human-robot collaboration is no longer an experiment; it is a production reality.

3. Edge AI hardware has crossed a price/performance tipping point. An industrial PC with an NVIDIA RTX GPU now costs a fraction of what it did two years ago and delivers tens of teraflops. Manufacturers can afford to put real intelligence next to their production line without shipping every image to the cloud.

Why this combination changes everything

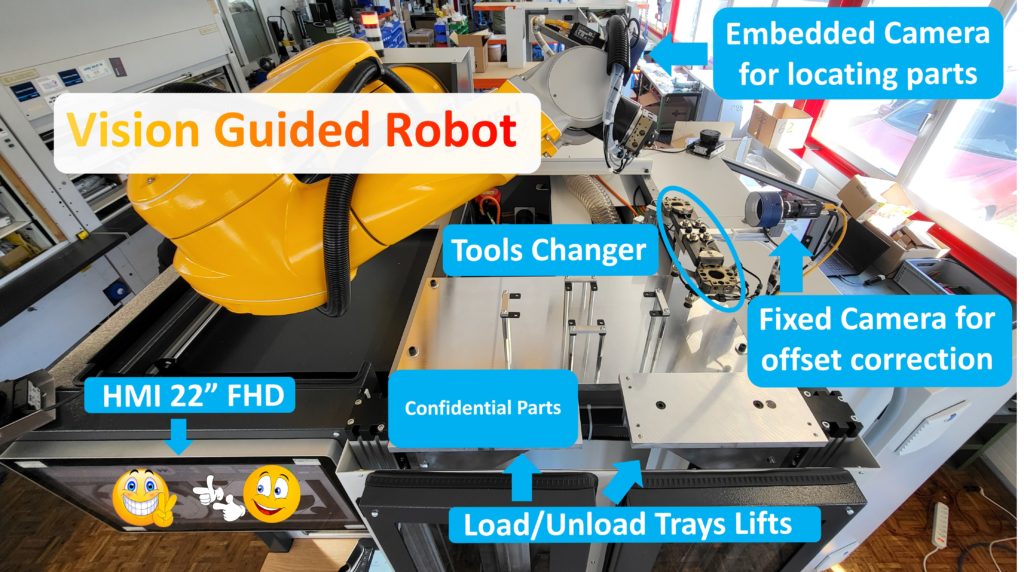

A robot without vision is a dumb manipulator. A camera without a robot is just an observer. Combine them and you get a closed-loop inspection system that can detect a defect, decide what to do about it, reposition the part, re-inspect it, sort it and even correct the upstream process.
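To make the closed loop concrete, here is a minimal Python sketch under stated assumptions. It is not how Retina AI or a UR controller is actually programmed: `classify` is a hypothetical callable standing in for the cameras plus vision model, the confidence threshold and retry count are made-up parameters, and `UpstreamMonitor` is a hypothetical stand-in for the "correct the upstream process" step.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Hypothetical interface: given a pose index, return (label, confidence).
# In a real cell this would trigger the cameras and run the vision model.
Classifier = Callable[[int], Tuple[str, float]]


@dataclass
class Decision:
    action: str    # "accept", "reject" or "manual_review"
    label: str     # last label predicted by the model
    attempts: int  # number of poses inspected


def inspect_part(classify: Classifier, min_conf: float = 0.9,
                 max_attempts: int = 3) -> Decision:
    """Detect, decide, reposition and re-inspect until the model is confident."""
    label = "unknown"
    for attempt in range(max_attempts):
        # Assumed: the robot has presented the part in pose `attempt`.
        label, conf = classify(attempt)
        if conf >= min_conf:
            # Confident verdict: sort the part.
            action = "accept" if label == "ok" else "reject"
            return Decision(action, label, attempt + 1)
        # Low confidence: loop again so the robot can reposition the part.
    # Still unsure after every pose: divert the part for a human to check.
    return Decision("manual_review", label, max_attempts)


class UpstreamMonitor:
    """Hypothetical feedback step: flag defect types that keep recurring."""

    def __init__(self, alert_after: int = 5):
        self.alert_after = alert_after
        self.counts: Counter = Counter()

    def record(self, decision: Decision) -> Optional[str]:
        if decision.action != "reject":
            return None
        self.counts[decision.label] += 1
        if self.counts[decision.label] >= self.alert_after:
            self.counts[decision.label] = 0  # reset after raising an alert
            return f"correct upstream: recurring '{decision.label}'"
        return None
```

For example, a part first read as a low-confidence scratch (0.55) and then as a confident one (0.97) comes back as `Decision(action='reject', label='scratch', attempts=2)`. Keeping the per-part verdict separate from the upstream monitor mirrors the split in the text: sorting happens immediately, while process correction only makes sense once a defect pattern repeats.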

Real examples from our own deployments:

- A watchmaking line where a UR3 cobot rotates a luxury watch case in front of four cameras while Retina AI classifies surface imperfections invisible to experienced human inspectors.

- A medical device manufacturer using vision-guided bin picking to handle injection-moulded plastic parts randomly dumped from a vibratory bowl, achieving a 99.7% pick success rate on components with no fixturing.

- A glass bottle plant inspecting more than 800 units per minute for cracks, chips and contamination, combining 3D SmartRay scans with Retina AI deep learning to find defects that traditional 2D vision misses entirely.
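To put the glass bottle line's throughput in perspective, a quick worked calculation gives the average interval between bottles, assuming a single inspection station sees every bottle in turn:

$$
t_{\text{unit}} = \frac{60\ \text{s/min}}{800\ \text{units/min}} = 0.075\ \text{s} = 75\ \text{ms}
$$

Above 800 units per minute the interval is shorter still. If acquisition and inference are pipelined, each stage rather than the whole chain has to fit inside this window, but even so it leaves little room for a round trip to a remote server.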

None of these were possible five years ago. All of them pay back their investment in under twelve months.

What the next decade looks like

We expect three big shifts between now and 2035:

- Flexibility becomes the competitive advantage, not pure speed. Factories that can reprogram their robots and their inspection models in an afternoon will out-compete those that need weeks of engineering for every product variant.

- Quality control shifts upstream. Instead of catching defects at the end of the line, vision-guided cobots will address their causes mid-process: adjusting injection moulding parameters, repositioning parts or requesting cleaning. The factory becomes self-regulating.

- AI models become a supply chain asset. The company that has built a deep library of trained inspection models for specific parts, materials and defects will have a moat no competitor can quickly replicate.

Where 3HLE fits in

This is exactly the problem space we were built for. As a Swiss certified Universal Robots integrator, authorized Sony Industrial distributor and developer of the Retina AI vision platform, we assemble the whole stack (software, hardware and robots) into turnkey systems that work from day one.

If you want to talk about what AI vision plus collaborative robots could do on your production line, get in touch. We offer free feasibility studies and will give you an honest answer on whether the technology is ready for your application.