Vision-guided pick & place is the killer application for collaborative robots. It eliminates the need for rigid fixturing, lets the same cell handle multiple part variants, and makes it practical to automate processes that previously could not be automated without dedicated tooling for each part.

How vision-guided pick & place works

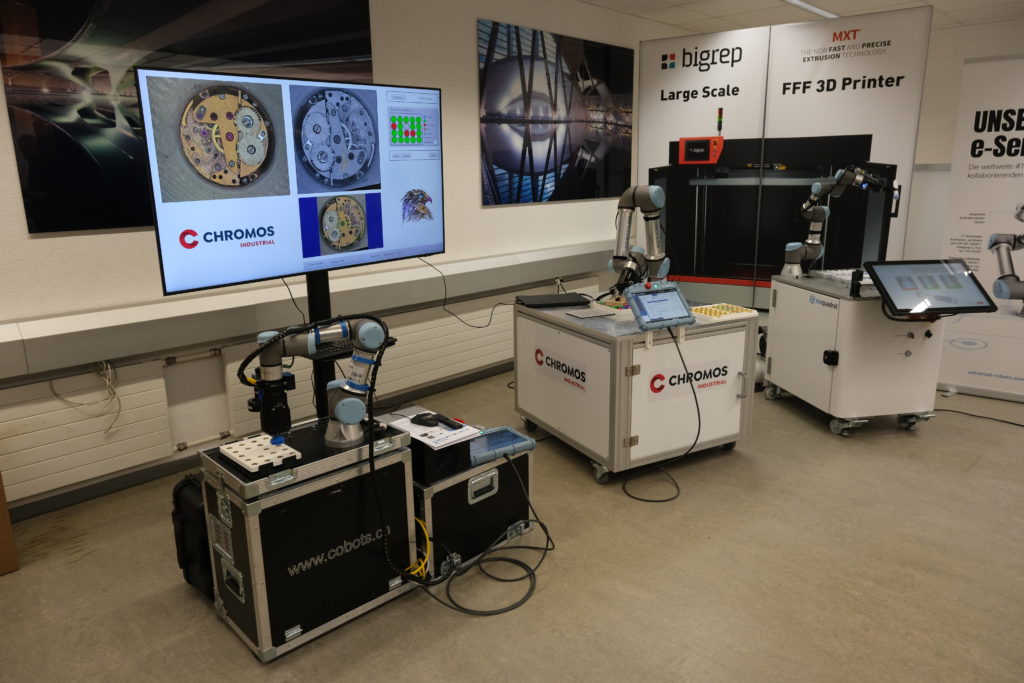

The core architecture is straightforward:

- Camera mounted above the work area or on the cobot end-effector captures images of incoming parts

- Vision software identifies each part and computes its position and orientation in robot coordinates

- Cobot controller receives target coordinates and executes a collision-free path to grip the part

- End-effector (gripper, vacuum cup, magnet) makes the physical pick

- Place station receives the part in a known orientation, often with secondary inspection
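
The second step, turning a detection in the image into robot coordinates, can be sketched for the simplest case: a fixed overhead camera looking straight down at a flat work surface. The sketch below is a minimal illustration, not the Retina AI implementation. It assumes a plain affine pixel-to-robot mapping fitted from a few calibration points, and the function names and calibration values are hypothetical. Lens distortion, part height, and eye-in-hand setups are ignored.

```python
import math

def solve3(a, b):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, 3):
            factor = m[r][col] / m[col][col]
            for c in range(col, 4):
                m[r][c] -= factor * m[col][c]
    x = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):
        known = sum(m[r][c] * x[c] for c in range(r + 1, 3))
        x[r] = (m[r][3] - known) / m[r][r]
    return x

def fit_pixel_to_robot(pixels, robot_xy):
    """Least-squares affine fit: x = a*u + b*v + c, y = d*u + e*v + f.

    Needs at least three calibration points that are not on one line.
    """
    rows = [(u, v, 1.0) for u, v in pixels]
    # Normal equations (A^T A) p = A^T t, solved once per robot axis.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    coeffs = []
    for axis in (0, 1):
        atb = [sum(r[i] * t[axis] for r, t in zip(rows, robot_xy)) for i in range(3)]
        coeffs.append(solve3(ata, atb))
    return coeffs  # [[a, b, c], [d, e, f]]

def pixel_to_robot(coeffs, u, v, angle_deg=0.0):
    """Map a detected part pose (pixel position, image angle) to the robot frame."""
    (a, b, c), (d, e, f) = coeffs
    x = a * u + b * v + c
    y = d * u + e * v + f
    # Push the part's direction vector through the linear part of the map,
    # so a flipped image axis (v pointing down) is handled correctly.
    du = math.cos(math.radians(angle_deg))
    dv = math.sin(math.radians(angle_deg))
    angle_robot = math.degrees(math.atan2(d * du + e * dv, a * du + b * dv))
    return x, y, angle_robot

# Hypothetical calibration: four marker positions seen in the image (pixels)
# and the same points touched with the robot tool (millimetres).
calib_pixels = [(0, 0), (100, 0), (0, 100), (100, 100)]
calib_robot = [(100, 300), (150, 300), (100, 250), (150, 250)]
coeffs = fit_pixel_to_robot(calib_pixels, calib_robot)
target = pixel_to_robot(coeffs, 50, 50, angle_deg=90)
```

The resulting `target` tuple is what the cobot controller would receive as a pick pose. A production cell would normally use a full camera calibration instead of this flat-plane shortcut.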

Three vision approaches, three price points

1. 2D rule-based vision — the entry level. Works for flat parts on a uniform background. Cheapest to deploy, but breaks down with lighting changes, part variation, or any part that does not lie flat facing the camera.

2. 2D deep learning vision (our Retina AI platform) — handles part variation, lighting changes, and partial occlusion. Trains on a few hundred images. In our experience it covers about 95% of pick & place applications. Mid-range cost.

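3. 3D vision (structured light or stereo) — the gold standard. Captures depth and enables true bin picking from random orientations. It can also handle reflective and transparent parts when the sensor and lighting are chosen for them, although such surfaces remain difficult for structured light and stereo and should be validated early. Higher cost, but the only viable option for some applications.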

Where vision-guided pick & place pays back fastest

- High part variety, low volume per variant — the same cell handles multiple SKUs without fixture changeover

- Tasks where parts arrive in random orientation — from vibratory bowls, conveyor belts, or bins

- Quality-sensitive applications — vision can inspect each part as part of the pick cycle

- Labour-shortage processes — especially night shift and weekend coverage

Common pitfalls (from our experience)

- Lighting underestimated — consistent, controlled lighting is more important than camera resolution

- Cycle time padding too thin — vision processing adds 100-500 ms per pick; this kills throughput if not budgeted

- Wrong gripper choice — vacuum looks elegant but fails on porous surfaces; mechanical fingers are heavier but more reliable

- Ignoring the place side — placing accurately is often harder than picking, especially with deformable parts

- Skipping the feasibility study — we have seen six-figure deployments fail because a single edge case was not validated upfront
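
The cycle-time pitfall above is easy to quantify. The sketch below is a back-of-envelope calculation, not a measurement: the 3.0 s motion time is an assumed placeholder, and only the 100-500 ms vision range comes from the list above. The `overlap` flag models the case where a fixed camera processes the next image while the robot is still moving, which is not possible in every cell (for example with a camera on the end-effector).

```python
def picks_per_hour(motion_s, vision_s, overlap=False):
    """Throughput of one pick & place cycle.

    motion_s: robot motion plus grip time per cycle, in seconds.
    vision_s: image capture plus processing time per pick, in seconds.
    overlap:  True if vision for the next part runs while the robot moves,
              so only the longer of the two phases limits the cycle.
    """
    cycle_s = max(motion_s, vision_s) if overlap else motion_s + vision_s
    return 3600.0 / cycle_s

MOTION_S = 3.0  # assumed placeholder, replace with the measured motion time

baseline = picks_per_hour(MOTION_S, 0.0)
for vision_ms in (100, 500):
    rate = picks_per_hour(MOTION_S, vision_ms / 1000.0)
    loss_pct = 100.0 * (1.0 - rate / baseline)
    print(f"vision {vision_ms} ms: {rate:.0f} picks/h ({loss_pct:.1f}% below baseline)")
```

With these assumed numbers, the upper end of the vision range costs roughly a seventh of the throughput when vision and motion run strictly in sequence. That is why the latency belongs in the cycle-time budget from the start.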

3HLE turnkey approach

As a Swiss Universal Robots Certified System Integrator with our own AI vision platform (Retina AI) and a partnership with OnRobot for end-of-arm tooling, we deliver vision-guided pick & place cells where every layer is engineered together: camera selection, lighting, AI model training, gripper choice, cobot programming, safety system, and PLC integration.

Most cells we deliver pay back in 12-18 months at typical Swiss manufacturing labour rates. The economics improve further with a higher product mix or 24/7 operation.
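
A simple payback calculation shows why more operating hours improve the economics. This is a sketch with placeholder figures only. The cell cost, labour rate, and working days below are illustrative assumptions, not 3HLE pricing or measured Swiss labour rates, and the function name is hypothetical.

```python
def payback_months(cell_cost, hourly_labour_cost, hours_per_shift,
                   shifts_per_day, days_per_year, annual_running_cost=0.0):
    """Simple payback period in months, ignoring financing and taxes.

    All monetary inputs use the same currency unit.
    """
    annual_saving = (hourly_labour_cost * hours_per_shift
                     * shifts_per_day * days_per_year) - annual_running_cost
    if annual_saving <= 0:
        return float("inf")  # the cell never pays back under these inputs
    return 12.0 * cell_cost / annual_saving

# Placeholder inputs for illustration only.
one_shift = payback_months(120_000, 40.0, 8, 1, 250)
two_shifts = payback_months(120_000, 40.0, 8, 2, 250)
print(f"one shift: {one_shift:.1f} months, two shifts: {two_shifts:.1f} months")
```

Doubling the covered shifts halves the payback period in this simplified model, because the same investment replaces twice as many labour hours.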

Request a vision-guided pick & place feasibility assessment. We typically need just photos of your parts and a brief description of the application to give you a realistic estimate.